Permanent Secretary objectives for 2015–16 – the measures against which the country’s most senior civil servants are supposedly judged – were quietly published in February. Ollie Hirst and Gavin Freeguard find the latest set lacking.

We were critical of permanent secretary objectives when they were first published in 2012–13, and then again in 2013–14. There were too many objectives and not enough measures. They were published too late into the year they applied to. There was too much inconsistency for them to be useful in performance management. And there was a ‘Christmas tree’ effect, as far too many things were hung on them.

The 2014–15 objectives were noticeably better. Most obviously, a review by Mark Lowcock, Permanent Secretary at DfID, formed the basis for a more sensible approach, introducing a new format that felled most of the Christmas trees.

So, do the 2015–16 objectives continue that improvement or do they revert to the meaningless? Unfortunately, it seems to be the latter.

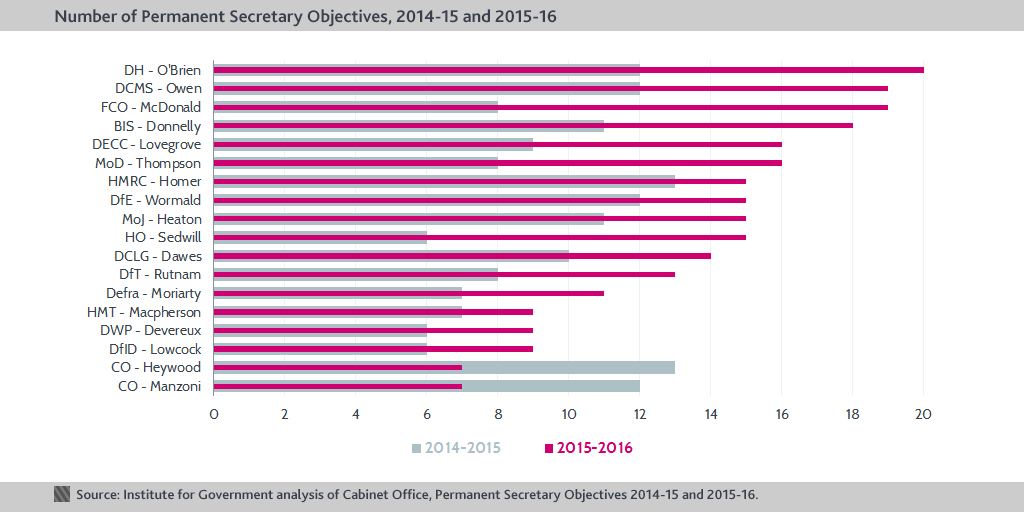

The number of objectives for each permanent secretary varies between seven and 20; the average number has risen from nine in 2014–15 to 14 in 2015–16.

In 2014–15, the average number of objectives per permanent secretary was nine – more sensible than the average of 18 in 2013–14. This year, however, the average number has risen again, to 14. Una O’Brien (DH) has 20, while Sue Owen (DCMS) and Sir Simon McDonald (FCO) have 19. Only Sir Jeremy Heywood (CO) and John Manzoni (CO) have fewer objectives than last year.

In part, the increase in the number of objectives reflects the new diversity objectives that were first announced in 2015; there are 44 of these across the 18 permanent secretaries. But this does not fully explain the increase: on average, permanent secretaries have five more objectives than last year, while each has an average of only 2.5 diversity objectives.
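The arithmetic behind that comparison can be checked with a short sketch. It uses only the figures quoted in this post; the variable names are our own, and it assumes the 44 diversity objectives are spread across all 18 permanent secretaries. The per-head figure it produces (roughly 2.4) is slightly below the 2.5 quoted above, which may reflect rounding or a slightly different count.

```python
# Figures quoted in the post; variable names are illustrative only.
diversity_objectives = 44      # new diversity objectives in 2015-16
permanent_secretaries = 18     # assumed denominator for the average
avg_objectives_2014_15 = 9
avg_objectives_2015_16 = 14

# Average number of diversity objectives per permanent secretary.
diversity_per_head = diversity_objectives / permanent_secretaries

# Rise in the average number of objectives between the two years.
increase = avg_objectives_2015_16 - avg_objectives_2014_15

# Part of the rise that the diversity objectives do not account for.
unexplained = increase - diversity_per_head

print(round(diversity_per_head, 1))  # 2.4
print(increase)                      # 5
print(round(unexplained, 1))         # 2.6
```

On these numbers, the diversity objectives account for only about half of the rise in the average.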

Sir Jeremy Heywood and John Manzoni – who both have fewer objectives than last year – appear to have divided up the Cabinet Office objectives, with Heywood taking the ‘business’ objectives and Manzoni the ‘strategic’ ones. Some departments are inconsistent with others in how they group the objectives. For example, Devereux (DWP) combines business and strategic objectives, while McDonald (FCO) uniquely uses categories from 2014–15.

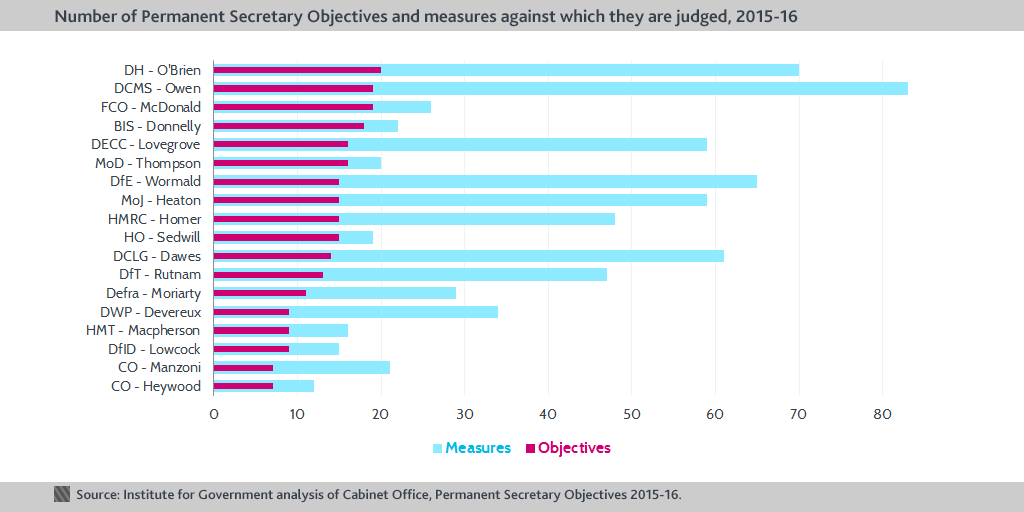

Four permanent secretaries have more than 60 measures of progress.

The number of measures against the objectives has spiralled. The average number of measures this year is 39, up from 15 in 2014–15. This ludicrously high number suggests that the measures, rather than the objectives themselves, are now the Christmas tree on which all manner of tasks and asks are being hung. In short, the measures are being used much as the objectives were in previous years. Four permanent secretaries have more than 60 measures against their objectives: Owen at DCMS (83), O’Brien at DH (70), Wormald at DfE (65) and Dawes at DCLG (61).

There are other problems, reflecting the inconsistency and deterioration of the objectives as a whole.

Departments are inconsistent in how they format and organise their objectives. They confuse measures, milestones and means of reaching them. The inconsistency across departments and the sheer number of objectives call into question how useful and usable they are – and, crucially, whether they are actually being used to measure performance (one of the FCO’s objectives was to raise engagement scores to ‘x%’).

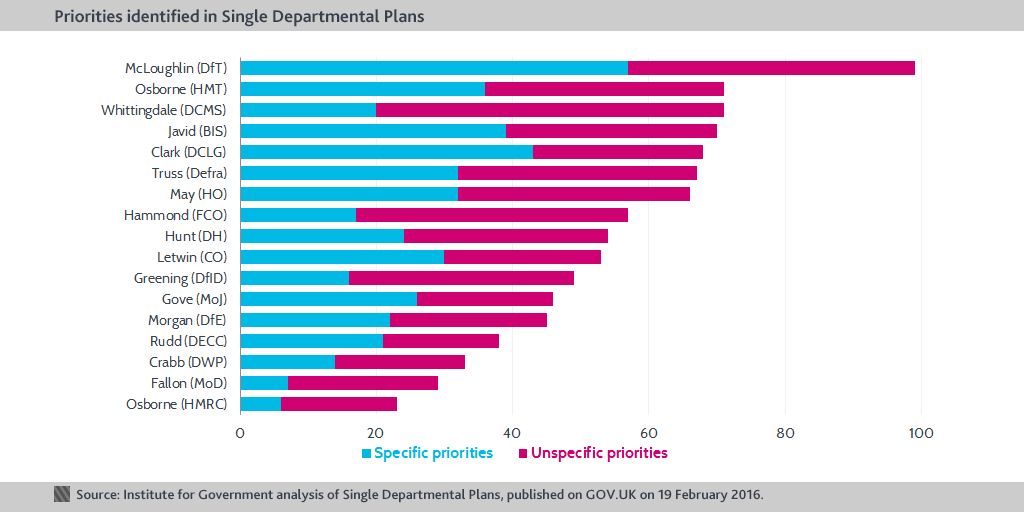

The 2015–16 measures have also been published very late in the year they are supposed to measure: February 2016, for measures extending from April 2015 to March 2016. This may be due in part to the change in government, or it could owe something to the (belated) publication of Single Departmental Plans (SDPs) on the same day in February 2016. Indeed, a number of the objectives explicitly reference the SDPs as their source, even though the SDPs were only finalised and published with three months remaining of the year to which the objectives supposedly refer.

It has been suggested that in future, permanent secretary objectives may be aligned with the SDPs; but (as we have previously argued) these are merely ‘a laundry list of nice-to-haves, giving no sense of ministerial priorities’. Until the SDPs that are in the public domain are of higher quality, linking the permanent secretary objectives to them risks worsening the objectives further.

The objectives are published in PDF format, making them more difficult to analyse (many people might not see the text-heavy objectives as ‘data’ which should be published in an open format). We have made them available in .xls format to make them more user-friendly. However, given the lack of consistency and the sheer number of measures against them, there is a limit to how user-friendly the objectives can be. The 2016–17 objectives will need to give a much clearer sense of priorities to be useful.